From Copy-Paste to Skill: What Thousands of AI Coding Sessions Taught Me About Guardrails

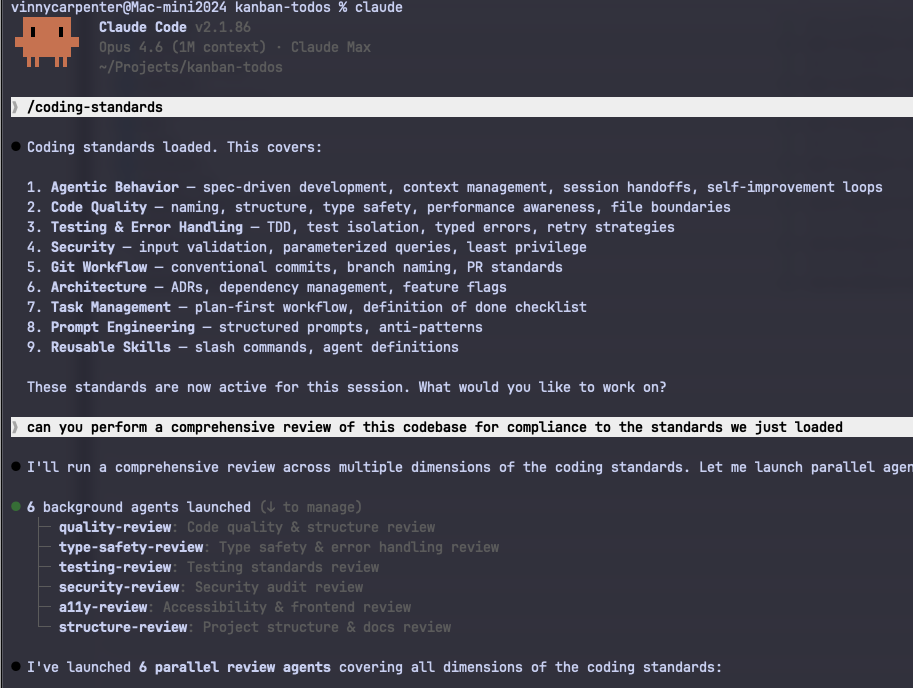

After thousands of sessions with Claude Code, Codex, Kiro, and every other LLM-based CLI and IDE I could get my hands on, I distilled what I learned into a reusable Claude Skill. Here's how those lessons became the guardrails that let me move faster and actually trust the output.

About a year ago, I had a text file.

Maybe forty lines. Pasted into Claude Code at the start of every session. "Don't forget to run the tests." "Use conventional commits." "Please don't rewrite my entire codebase when I ask you to fix a typo." The kind of guardrails you write after your AI assistant confidently ships code that breaks in ways you didn't anticipate.

That file became a markdown doc. The doc became a system. And now, after thousands of sessions across Claude Code, Codex, Kiro, and every LLM-based CLI and IDE I could get my hands on, it's a full Claude Code Skill (version 10.0) that I'm sharing publicly.

The real story isn't the version number. It's what those sessions taught me about how to make these tools trustworthy, not just fast.

The Problem Isn't the Model

Here's the thing nobody says clearly: the AI tools aren't the problem. I've used Claude Code, Codex, and Kiro extensively. I've run the Copilot integrations, the IDE plugins, the agentic workflows. Each has real strengths. Each has the same failure mode.

They're fast. Impressively, dangerously fast. And without direction, that speed works against you.

The tools are not interchangeable, and I want to be precise about that. Claude Code has persistent memory via CLAUDE.md, which means corrections compound over time. Kiro has spec-driven agents baked into its core workflow. Copilot lives in the editor and has no shared project context by default. The mechanics differ. But the failure mode is identical across all of them: they'll produce code that works technically and misses the point entirely.

Early on, I'd start a session, ask for something, and get back code that worked. Technically. It wouldn't match the patterns in my codebase. It wouldn't have tests. It would reach for an unfamiliar library when the standard library had a perfectly good solution. It would "finish" without committing, and when the context window ran out, the work evaporated.

The problem wasn't the model. The problem was me. I hadn't told it how we do things.

What "Fast and Wrong" Actually Costs

Before I get to the solution, let me be direct about the tradeoffs, because I don't want to oversell this.

Adding guardrails has a cost. The skill adds context overhead to every session. More tokens, slightly longer startup. The PostToolUse hooks add a formatting step on every file write. If you're on a tight token budget or optimizing for raw session startup time, you'll feel it.

What you get in return is harder to measure precisely, but real. Across more than a dozen projects and multiple teams over the past year, the outcomes were consistent: fewer review cycles because the code matched our patterns on the first pass, faster onboarding because new engineers got the same guardrails automatically, and (the one I didn't fully anticipate) PR reviewers started trusting AI-assisted contributions in a way they hadn't before.

That last one matters more than the speed. Speed is table stakes. Trust is what actually changes how your team works.

Version 1: Any Constraints Beat No Constraints

The first version was embarrassingly simple. Six rules, pasted in at the start of every session:

- Read the codebase before writing code.

- Write tests.

- Use conventional commits.

- Don't modify files outside the task scope.

- Commit frequently.

- Run the test suite after every change.

Even this was a game-changer. Not because the rules were sophisticated, but because any constraints beat no constraints when you're working with an LLM. The model wants to help. If you don't define what "helpful" means, it'll define it for you, and you won't always like the definition.

Version 2: Corrections Are the Raw Material

Every time I didn't like what I got, I added a rule. Every correction became a line item.

Claude used a TypeScript enum? "Prefer literal unions over enums." Claude wrote a 200-line function? "Functions should be 30 lines or fewer." Claude swallowed an exception silently? "Never swallow exceptions. Use typed errors."
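As a small illustration of the first of those rules, here is a minimal sketch of what "prefer literal unions over enums" can look like. The `Status` type and `describeStatus` function are hypothetical names picked for the example, not taken from the skill itself.

```typescript
// Hypothetical example: a literal union in place of `enum Status { ... }`.
// The union is erased at compile time and leaves no runtime object behind.
type Status = "idle" | "loading" | "done";

// Exhaustive switch: adding a member to Status makes this function fail to
// compile until the new case is handled.
function describeStatus(status: Status): string {
  switch (status) {
    case "idle":
      return "Nothing in flight";
    case "loading":
      return "Request in progress";
    case "done":
      return "Request finished";
    default: {
      const unreachable: never = status;
      throw new Error(`Unhandled status: ${String(unreachable)}`);
    }
  }
}
```

The `never` assignment in the default branch is one common way to get a compile-time reminder when the union grows.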

My wife asked me one night why I was furiously typing at 11pm. I told her I was writing rules for an AI that keeps making the same mistakes. She gave me the look. You know the one.

But this was the actual breakthrough: corrections are the highest-signal input you have. Every mistake Claude made that I caught was a rule waiting to be written. Don't just fix it. Capture it. The document grew from 40 lines to 200, then 400.

I organized it into sections: agentic behavior, code quality, testing, security, git workflow. I added a "Definition of Done" checklist because Claude kept telling me things were "done" when they clearly weren't. I added a "Red Flags" section to pattern-match on recurring mistakes.

Here's a concrete example of what that looked like in practice. Before guardrails, asking Claude to add error handling to an async function would often produce something like this:

    try {
      const result = await fetchData();
      return result;
    } catch (e) {
      console.log(e);
    }

Silent failure, untyped catch, no re-throw. With the rule "never swallow exceptions, use typed errors, always re-throw or return a typed Result" in place, the same request produced:

    try {
      const result = await fetchData();
      return { ok: true, data: result };
    } catch (err) {
      if (err instanceof NetworkError) return { ok: false, error: err };
      throw err;
    }

Same prompt. Different standards document. Completely different output.
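The second snippet leans on a `NetworkError` class and a Result shape that the post does not show. A self-contained sketch of how those pieces might fit together follows. Every name here (`NetworkError`, `Result`, `loadData`, the injected `fetcher`) is an assumption made for illustration, not the author's actual code.

```typescript
// Hypothetical error type for failures the caller is expected to handle.
class NetworkError extends Error {
  readonly status: number;
  constructor(message: string, status: number) {
    super(message);
    this.name = "NetworkError";
    this.status = status;
    // Keeps instanceof working even when compiling to ES5 targets.
    Object.setPrototypeOf(this, NetworkError.prototype);
  }
}

// Discriminated union: callers must check `ok` before touching `data` or `error`.
type Result<T, E> = { ok: true; data: T } | { ok: false; error: E };

// Expected failures become a typed Result; anything unexpected is re-thrown,
// so nothing is swallowed silently.
async function loadData<T>(
  fetcher: () => Promise<T>,
): Promise<Result<T, NetworkError>> {
  try {
    return { ok: true, data: await fetcher() };
  } catch (err) {
    if (err instanceof NetworkError) return { ok: false, error: err };
    throw err;
  }
}
```

Passing the fetcher in as a parameter is a choice made for this sketch so the function can be exercised without a real network call.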

Version 7: Learning from the Community

By early 2026, Boris Cherny (the creator of Claude Code) had started publishing his workflow tips on Twitter. I want to be precise here about what I already had and what I learned, because the distinction matters.

I had spec-driven development, subagent delegation, the re-planning trigger, and the correction-to-rule pipeline. Those came from my own sessions.

What Boris surfaced that I hadn't considered: give Claude a domain-specific verification strategy before you start coding, not just a checklist at the end. Define how you'll prove correctness upfront, and output quality improves substantially. I was reviewing at the end. Boris built verification into the beginning.

He also showed me that PostToolUse hooks could auto-format on every file write, and PostCompact hooks could re-inject critical context when Claude compressed the conversation. Here's what a PostToolUse hook actually looks like in practice:

    {
      "hooks": {
        "PostToolUse": [
          {
            "matcher": "Write|Edit",
            "hooks": [
              {
                "type": "command",
                "command": "jq -r '.tool_input.file_path' | xargs npx biome format --write"
              }
            ]
          }
        ]
      }
    }

Every file write triggers the formatter. No discipline required. Standards enforced by automation beat standards enforced by willpower, every time.
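If the formatting step grows beyond a one-liner, the hook command can point at a small script instead. The sketch below assumes Claude Code hands the hook a JSON payload on stdin containing `tool_name` and `tool_input.file_path`. Treat that shape as an assumption to check against the hooks reference. `HookPayload` and `fileToFormat` are hypothetical names.

```typescript
// Assumed payload shape: only the fields this sketch reads.
interface HookPayload {
  tool_name?: string;
  tool_input?: { file_path?: string };
}

// Returns the path to format, or undefined when the payload is malformed or
// does not describe a Write/Edit event.
function fileToFormat(rawStdin: string): string | undefined {
  let parsed: unknown;
  try {
    parsed = JSON.parse(rawStdin);
  } catch {
    return undefined; // not JSON: skip rather than crash the session
  }
  if (typeof parsed !== "object" || parsed === null) return undefined;

  const payload = parsed as HookPayload;
  const isWrite = payload.tool_name === "Write" || payload.tool_name === "Edit";
  const path = payload.tool_input?.file_path;
  return isWrite && typeof path === "string" && path.length > 0 ? path : undefined;
}
```

Keeping the parsing in a pure function means the decision of whether to format can be tested without starting a session or touching the filesystem.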

The CLAUDE.md pattern was the one I most wished I'd arrived at myself: update it directly after every correction, and let Claude write its own rules. The @.claude pattern in PR reviews is particularly powerful. Tag Claude in a review comment, and the feedback automatically becomes a permanent rule in CLAUDE.md. The compound effect over time is significant.

One note on portability: the principles here aren't Claude Code-specific. Anywhere you can inject a system prompt, you can apply this approach. The Skill format is Claude Code-native, but the underlying logic works in any tool that accepts context at session start.

Version 10: From Document to Skill

By this point the document was solid across dozens of projects. The distribution was still manual, and that was the problem. Sometimes I'd forget to reference it. New engineers would start sessions without it.

The fix was making it a Claude Code Skill: a markdown file with YAML frontmatter that Claude Code loads automatically when the task matches the description. Once installed, you don't invoke it. It governs every coding session without being prompted.
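Because loading depends on the frontmatter, a cheap pre-commit or CI check on it can catch a skill that silently stops loading. Here is a minimal sketch, assuming the frontmatter carries flat `name` and `description` fields. It is not a YAML parser and not how Claude Code itself reads skills, and `readFrontmatter` and `missingSkillFields` are hypothetical helpers.

```typescript
// Reads flat `key: value` pairs between the opening and closing `---` lines.
function readFrontmatter(markdown: string): Record<string, string> {
  const lines = markdown.split(/\r?\n/);
  if (lines[0]?.trim() !== "---") return {};

  const fields: Record<string, string> = {};
  for (const line of lines.slice(1)) {
    if (line.trim() === "---") return fields; // closing delimiter reached
    const sep = line.indexOf(":");
    if (sep > 0) fields[line.slice(0, sep).trim()] = line.slice(sep + 1).trim();
  }
  return {}; // no closing delimiter: treat as missing frontmatter
}

// Reports which of the assumed required fields are absent or empty.
function missingSkillFields(markdown: string): string[] {
  const fields = readFrontmatter(markdown);
  return ["name", "description"].filter((key) => !fields[key]);
}
```

An empty array from `missingSkillFields` means both fields are present. Anything else is a list of what to fix before committing.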

The package is simple:

    .claude/
      skills/
        coding-standards/
          SKILL.md      # auto-loaded for all coding tasks
      commands/
        qspec.md        # /qspec: generate a feature spec on demand
        qcheck.md       # /qcheck: run a skeptical staff engineer review

The two slash commands are the only pieces requiring explicit invocation. /qspec forces spec-writing mode before implementation starts. /qcheck runs a skeptical staff engineer review against the full standards. Everything else is automatic.

What's Actually in It

Rather than walk you through every section like a README, here are the parts that changed how I work.

Agentic behavior comes first: how Claude should orient on a codebase before touching anything, when to write a spec versus implement directly, how to handle ambiguity, and when to stop and re-plan. It also defines a two-layer memory system. Project-specific learnings live in tasks/lessons.md. Persistent rules live in CLAUDE.md. Every correction writes to one of these. Nothing gets lost.

Verification-first development gets its own section because it's the highest-leverage practice in the document. Define how you'll prove correctness before writing code, with domain-specific strategies for backend, API, frontend, data, and infrastructure work. This single habit cuts rework more than any other rule in here.
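To make the habit concrete, here is a minimal sketch of the order of operations: the checks are written down first, the implementation second, and a gate reports what still fails. The `slugify` function and its cases are hypothetical, chosen only because they are small. The skill's own domain-specific strategies are not reproduced here.

```typescript
// Step 1: agree on how correctness will be proven, before any implementation.
type Check = { input: string; expected: string };

const slugifyChecks: Check[] = [
  { input: "Hello World", expected: "hello-world" },
  { input: "  Trim me  ", expected: "trim-me" },
  { input: "Already-slugged", expected: "already-slugged" },
  { input: "Symbols & stuff!", expected: "symbols-stuff" },
];

// Step 2: implement against the agreed checks.
function slugify(text: string): string {
  return text
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-") // collapse each non-alphanumeric run to one dash
    .replace(/^-+|-+$/g, ""); // strip leading and trailing dashes
}

// Step 3: the verification gate returns the failing checks; empty means done.
function failingChecks(fn: (s: string) => string, checks: Check[]): Check[] {
  return checks.filter((c) => fn(c.input) !== c.expected);
}
```

Calling `failingChecks(slugify, slugifyChecks)` before declaring the task finished gives the assistant a concrete target instead of a judgment call.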

The hooks section is where the skill earns its keep. PostToolUse auto-formats on every write. PostCompact re-injects critical context after conversation compaction. Stop hooks act as verification gates before Claude declares something done.

For subagents, the model selection guidance is deliberate: Haiku for read-only analysis tasks (fast, cheap, sufficient for codebase orientation), Sonnet or Opus for architecture and complex reasoning (the cost is worth it when the output matters). The logic is cost-capability tradeoff matched to task risk.
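That guidance can be written down as a lookup table so the choice is explicit rather than ad hoc. This is a sketch under assumptions: the task categories are hypothetical labels, and only the tier assignments come from the paragraph above.

```typescript
// Hypothetical task categories for illustration.
type TaskKind = "read-only-analysis" | "architecture" | "complex-reasoning";
type ModelTier = "haiku" | "sonnet-or-opus";

// Keyed by task kind, so adding a kind without assigning a tier fails to compile.
const tierByTask: Record<TaskKind, ModelTier> = {
  "read-only-analysis": "haiku",
  architecture: "sonnet-or-opus",
  "complex-reasoning": "sonnet-or-opus",
};

function pickModelTier(kind: TaskKind): ModelTier {
  return tierByTask[kind];
}
```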

The Definition of Done is a single unified checklist: Correctness and Quality, Self-Review, Documentation and Process. One checklist, not three. If it's not on the list, it's not blocking. If it is, it's not optional.

A Word on Governance

One question I get from other engineering leaders: who owns the skill when it drifts?

The answer is the same as any shared standard: it needs an owner, a version, and a review cadence. We treat SKILL.md like a dependency. It lives in version control, changes go through PR review, and the team has a standing agenda item to surface corrections that should become permanent rules.

The @.claude PR pattern helps here. Every correction that surfaces in code review is a candidate for the standards document. When the correction recurs, it becomes a rule. When the rule is widely adopted, it goes into SKILL.md. The pipeline closes.
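The "when the correction recurs" step is easy to automate once review corrections are collected in one place. The sketch below assumes they are available as plain strings and treats two occurrences as recurring. Both the input format and the threshold are assumptions, and `recurringCorrections` is a hypothetical helper.

```typescript
// Groups corrections by normalized text and returns those seen at least
// `minOccurrences` times, i.e. the candidates for promotion to a rule.
function recurringCorrections(
  corrections: string[],
  minOccurrences = 2,
): string[] {
  const counts = new Map<string, number>();
  for (const correction of corrections) {
    const key = correction.trim().toLowerCase();
    counts.set(key, (counts.get(key) ?? 0) + 1);
  }
  return Array.from(counts.entries())
    .filter(([, seen]) => seen >= minOccurrences)
    .map(([key]) => key);
}
```

Exact-text matching after lowercasing is deliberately naive. In practice near-duplicates would still need a human to merge them during the standing review.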

What Changes on Day One

If you clone the repo and drop the .claude/ directory into your project today, here's what's different by the end of your first session: Claude will read your codebase before writing anything, commit in logical increments rather than one giant blob at the end, run the test suite after each change, and tell you when it's uncertain rather than improvising. Those four behaviors alone will change the quality of the output.

The trust takes longer. But it compounds. After a few weeks of sessions where the output consistently matches your patterns, you'll notice reviewers stop flagging the same classes of issues on AI-assisted PRs. That's the signal that the guardrails are working.

The GitHub Repo

I've published the full skill, slash commands, and README on GitHub:

github.com/vscarpenter/coding-standards-skill

Clone it, drop the .claude/ directory into your project, and commit it. PRs welcome. This thing got better every time someone contributed a pattern, and I'd love to keep that going.

The recursive part of this that still gets me: I'm writing rules for an AI that writes code for me, and the AI helps me refine those rules, and the rules make the AI better, which surfaces new corrections, which become new rules. It's a strange loop. It's also just engineering. Define the standard. Capture the deviation. Raise the floor.

We're living in the future. Might as well bring some standards with us.
